Why It Is Now Possible

Vision AI has moved from counting people to measuring operations - reliably, at scale, and at lower deployment cost.

Empowered by Vision AI

Advances in AI models, wide-angle imaging, and edge processing now allow defined behaviours to be detected and converted into measurable operational metrics across entire environments.

Behaviour, Not Just Movement

Earlier systems tracked motion. Now, AI models detect defined behaviours within defined zones:

- Queue forming vs dispersing

- Table occupied vs vacant

- Task completed vs missed (e.g. packing, cleaning)

This is not open-ended interpretation: each model recognises only a fixed set of predefined states within predefined zones.
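As a rough illustration of what "defined behaviours within defined zones" can mean in practice, here is a minimal sketch. It assumes an upstream detector already supplies people positions as (x, y) points; the `Zone` class, the function names and the state labels are hypothetical and are not taken from any FootfallCam API.

```python
from dataclasses import dataclass

Point = tuple[float, float]


@dataclass
class Zone:
    """A named area of interest (hypothetical structure for illustration)."""
    name: str
    polygon: list[Point]  # vertices in image or floor coordinates


def point_in_polygon(p: Point, polygon: list[Point]) -> bool:
    """Ray-casting test: toggle 'inside' at each edge crossed by a ray to the right of p."""
    x, y = p
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        if (y1 > y) != (y2 > y):  # edge spans the ray's height
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def zone_state(zone: Zone, people: list[Point]) -> str:
    """Assumed rule: a table zone is 'occupied' if at least one person is inside it."""
    count = sum(point_in_polygon(p, zone.polygon) for p in people)
    return "occupied" if count > 0 else "vacant"


def queue_trend(counts: list[int]) -> str:
    """Assumed rule: compare the last and first head counts in a short window."""
    if len(counts) < 2 or counts[-1] == counts[0]:
        return "stable"
    return "forming" if counts[-1] > counts[0] else "dispersing"


table = Zone("table-1", [(0, 0), (4, 0), (4, 4), (0, 4)])
print(zone_state(table, [(2, 2), (10, 10)]))
print(queue_trend([1, 2, 4]))
```

The point of the sketch is that each output is one of a small, fixed set of states per zone, which is what makes the result measurable rather than interpretive.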

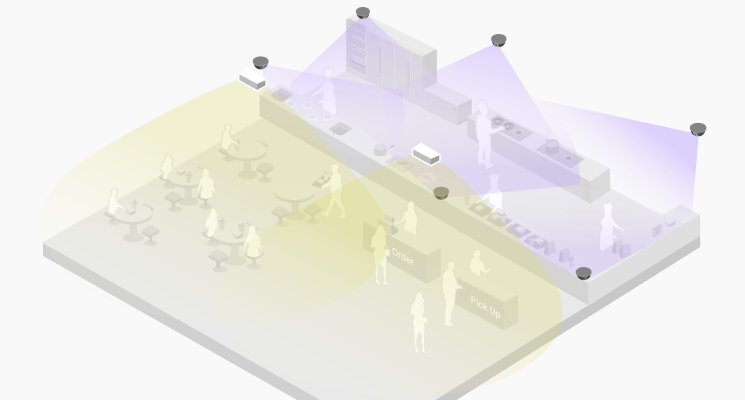

Wide Coverage Economics

Fisheye and wide-angle optics enable full-area visibility:

- Entire queue zones

- Seating areas

- Kitchen stations

Instead of multiple narrow cameras, one device can cover a full operational zone (~3,000–4,000 sq ft).
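For a first-pass estimate, the coverage figure above translates into a simple device count. The sketch below is only back-of-envelope arithmetic: the 10,000 sq ft floor area is a hypothetical example, and the calculation ignores walls, ceiling height, occlusion and any overlap a real site survey would account for.

```python
import math


def devices_needed(floor_area_sqft: float, coverage_sqft: float) -> int:
    """Round up: a partially covered remainder still needs its own device."""
    return math.ceil(floor_area_sqft / coverage_sqft)


area = 10_000  # hypothetical floor area in sq ft
best_case = devices_needed(area, 4_000)   # upper end of the quoted coverage range
worst_case = devices_needed(area, 3_000)  # lower end of the quoted coverage range
print(best_case, worst_case)
```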

Real-World Conditions Are Now Solvable

Previous systems failed in real environments:

- Occlusion in crowds

- People carrying trays / objects

- Children, groups, irregular movement

Modern models are trained on dense, real-world scenarios, enabling:

- Stable queue measurement

- Reliable tracking in cluttered scenes

- Differentiation of roles (e.g. staff vs customers via uniform cues)

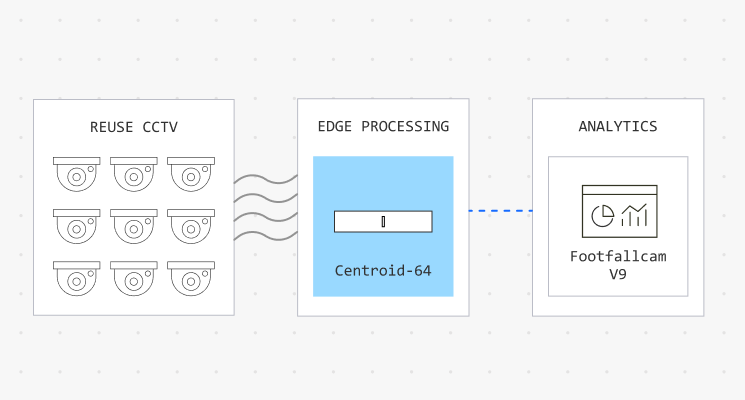

Edge + CCTV Reuse Makes It Deployable

Two key enablers remove deployment friction:

Edge Processing

- Data processed locally

- No heavy cloud dependency

- Lower latency, controlled bandwidth
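One way to picture why local processing keeps bandwidth under control: if per-frame results are aggregated on the device, only a compact summary needs to leave the site. The sketch below is an assumption about how such aggregation could look, not a description of the actual product. The `summarise_window` function, its field names, the frame rate and the timestamp are all hypothetical.

```python
import json
from statistics import mean


def summarise_window(frame_counts: list[int], window_start: str) -> dict:
    """Collapse per-frame queue counts for one time window into a small summary."""
    return {
        "window_start": window_start,
        "frames": len(frame_counts),
        "mean_queue": round(mean(frame_counts), 2),
        "max_queue": max(frame_counts),
    }


# Hypothetical one-minute window at 10 frames per second.
frame_counts = [3] * 300 + [5] * 300
summary = summarise_window(frame_counts, "2026-01-01T12:00:00")

raw_bytes = len(json.dumps(frame_counts))  # size if every frame's count were uploaded
summary_bytes = len(json.dumps(summary))   # size of the aggregated payload
print(summary, raw_bytes, summary_bytes)
```

In this toy example the summary is a small fraction of the raw per-frame payload, and no video leaves the device at all.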

CCTV Reuse

- Existing cameras can be analysed

- Supplement only where needed

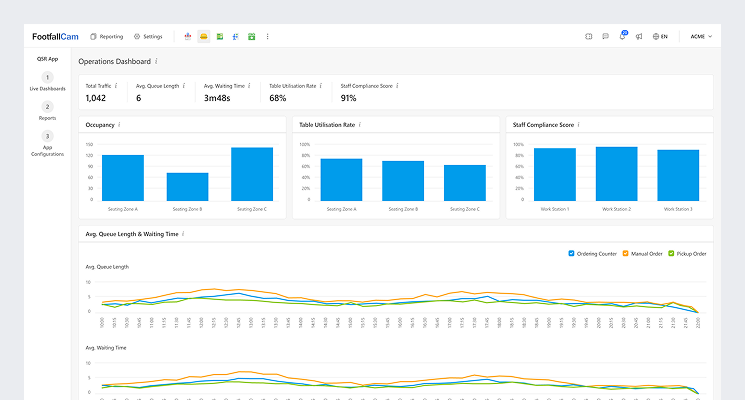

From Observation to Score

All detected states are converted into structured metrics:

- Queue length → time-based distribution

- Table occupancy → utilisation rate

- Task completion → compliance score

These are not raw signals. They become standardised variables that can be:

- Compared across stores

- Compared across time (hour / day / campaign)

- Compared across teams
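The three conversions listed above can be sketched in a few lines. This is an illustrative reading only: it assumes the "time-based distribution" is a mean queue length per hour, that utilisation is the share of samples in which a table was occupied, and that compliance is completed tasks divided by expected tasks. All function names and sample data are hypothetical.

```python
from collections import defaultdict
from statistics import mean


def queue_distribution(samples: list[tuple[int, int]]) -> dict[int, float]:
    """Mean queue length per hour from (hour, queue_length) samples."""
    by_hour: dict[int, list[int]] = defaultdict(list)
    for hour, length in samples:
        by_hour[hour].append(length)
    return {hour: mean(lengths) for hour, lengths in sorted(by_hour.items())}


def utilisation_rate(occupied: list[bool]) -> float:
    """Fraction of samples in which the table was occupied."""
    return sum(occupied) / len(occupied) if occupied else 0.0


def compliance_score(completed: int, expected: int) -> float:
    """Share of expected tasks (e.g. cleaning rounds) that were detected as done."""
    return completed / expected if expected else 0.0


print(queue_distribution([(12, 4), (12, 6), (13, 2)]))
print(utilisation_rate([True, True, False, True]))
print(compliance_score(9, 10))
```

Because each metric is a plain number on a common scale, the same calculation can be run per store, per hour or per team and the results compared directly.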

What was invisible is now measurable.

Operations are no longer inferred - they are measured. Execution is no longer sampled - it is continuously visible. Performance is no longer subjective - it is comparable across locations, teams, and time.


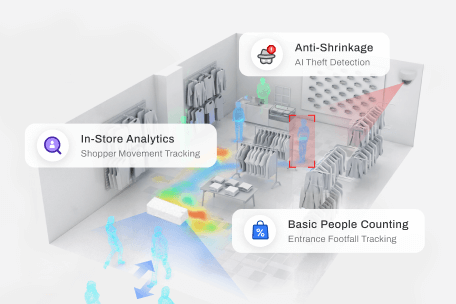

Deployment Examples

Choosing the right functions and devices for your specific purpose is crucial. Before starting your own design, it can help to review deployments of similar systems in other malls.

Copyright © 2002 - 2026 FootfallCam™. All Rights Reserved.
