Small Retailer

From Vision to Behavioural Understanding

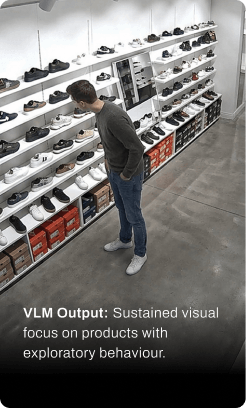

Traditional video analytics measure where people are and how long they stay. This creates useful signals, but they remain interpretations. A person standing in front of a shelf for twenty seconds may be browsing, waiting, distracted, or not engaged at all.

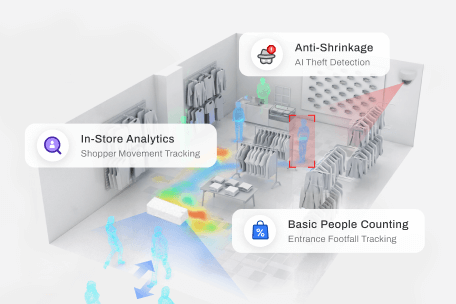

FootfallCam introduces a new generation of video analytics that understands context: what people are doing, how they interact, and what it means for your business.

Business Usefulness in Retail

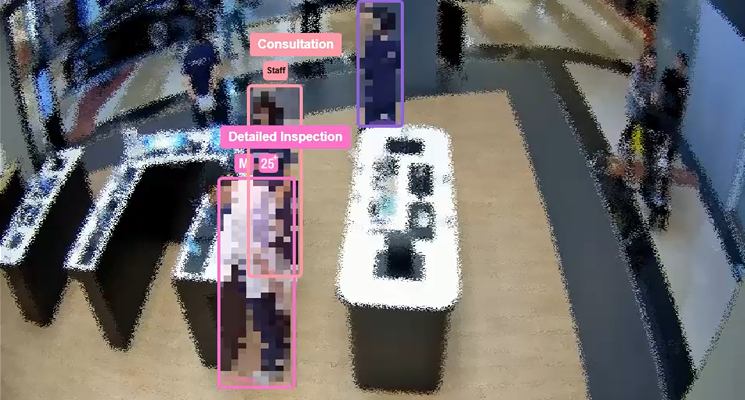

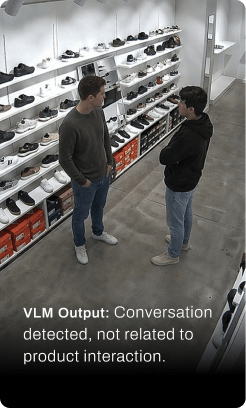

Instead of relying on time thresholds or proximity alone, the system interprets behaviour: body orientation, hand movement, interaction patterns, and situational context. It distinguishes a shopper actively considering a product from one giving a casual glance, a staff member restocking, or a person simply passing time. The result is not a guess, but a classified outcome aligned to real-world behaviour.

| Area | Applications | Value to Business |
| --- | --- | --- |
| Customer Engagement | Detects real interactions between customers and staff | Evaluate service quality and staff responsiveness |
| Merchandising Impact | Understand browsing patterns and dwell intensity | Measure true product engagement beyond footfall |
| Checkout Service | Classifies greeting, serving, upselling, and handover moments | Measure service quality in a consistent, auditable way |
| SOP Adherence | Monitors defined service behaviours and process steps | Improve operational discipline and training |
| Validation & Coaching | Stores reviewable event clips and classified outcomes | Turn observations into evidence, coaching, and improvement |

How It Works in Practice

Metrics: Contextual, Not Just Movement

Contextual Categorisation

A vision-language model (VLM) refines what a person is doing within a detected zone or interaction:

- Shelf zone: glance, browse, active product interest

- Staff interaction: asking, being served, upsell interaction

- Checkout: payment, completion, handover

This turns a simple “20 seconds in front of shelf” into a defined behaviour category.
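As a rough illustration of the idea above, the zone categories can be written down as a small lookup table, with a dwell event only accepted once it carries a category that is valid for its zone. This is a minimal sketch, not FootfallCam's implementation: the names `Zone`, `CATEGORIES`, `ClassifiedEvent`, and `classify` are hypothetical, and the label is assumed to come from an upstream model.

```python
from dataclasses import dataclass
from enum import Enum


class Zone(Enum):
    SHELF = "shelf"
    STAFF_INTERACTION = "staff_interaction"
    CHECKOUT = "checkout"


# Behaviour categories per zone, copied from the list above.
CATEGORIES = {
    Zone.SHELF: ("glance", "browse", "active product interest"),
    Zone.STAFF_INTERACTION: ("asking", "being served", "upsell interaction"),
    Zone.CHECKOUT: ("payment", "completion", "handover"),
}


@dataclass(frozen=True)
class ClassifiedEvent:
    zone: Zone
    dwell_seconds: float
    category: str


def classify(zone: Zone, dwell_seconds: float, label: str) -> ClassifiedEvent:
    """Attach a behaviour label to a dwell event.

    `label` is assumed to be produced elsewhere (for example by a VLM);
    this function only checks that it is a known category for the zone.
    """
    if label not in CATEGORIES[zone]:
        raise ValueError(f"{label!r} is not a category for zone {zone.value!r}")
    return ClassifiedEvent(zone, dwell_seconds, label)


# "20 seconds in front of shelf" becomes a defined behaviour category.
event = classify(Zone.SHELF, 20.0, "browse")
print(event.category)
```

Keeping the categories in one table means a report can only ever contain labels that were defined up front, which is what makes the outcome comparable across stores.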

Built-in Validation

The same mechanism verifies whether an observed action is genuinely meaningful:

- Distinguishes shopper engagement from staff restocking

- Filters out idle or irrelevant presence

- Confirms whether interaction actually occurred

This removes ambiguity from traditional metrics and increases trust.
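The three checks above can be pictured as a simple filter over classified observations. The sketch below is illustrative only and assumes each observation already carries an actor type, an activity label, and a flag for whether an interaction was confirmed; the `Observation` fields and the `is_meaningful` function are hypothetical names, not part of the product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    actor: str                   # assumed labels: "shopper" or "staff"
    activity: str                # e.g. "browse", "restocking", "idle"
    interaction_confirmed: bool  # did an interaction actually occur?


def is_meaningful(obs: Observation) -> bool:
    """Apply the three validation checks in order."""
    if obs.actor != "shopper":   # excludes staff restocking
        return False
    if obs.activity == "idle":   # filters out idle or irrelevant presence
        return False
    return obs.interaction_confirmed


observations = [
    Observation("shopper", "browse", True),
    Observation("staff", "restocking", True),
    Observation("shopper", "idle", False),
    Observation("shopper", "browse", False),
]

# Only the first observation passes all three checks.
validated = [obs for obs in observations if is_meaningful(obs)]
print(len(validated))
```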

Why This Matters

- Moves from assumption → evidence

- Reduces false interpretation of dwell time and presence

- Provides verifiable context, not just positional data

- Enables consistent measurement of real-world behaviour

Classifying Shopper Behaviour

The system identifies and distinguishes subtle differences in posture, attention, and interaction to classify behaviour types, from non-engagement to active product interaction and staff engagement, enabling structured interpretation of shopper movement and intent.

AI-generated illustrations only; no real customer data, surveillance footage, or personal information is used or captured.

Engagement metrics:

- Phone Distraction

- Casual Glance

- Active Browsing

- Deep Product Interaction

- Talking to Friend

- Talking About Product

The Future of In‑Store Understanding

After visitors enter, zone counting shows where people actually go inside the mall.

Zones are commonly defined as:

- Major wings (for example, East / West)

- Atriums

- Key corridors between anchors